5.8 Catching Memory Defects in the Kernel — Comparison and Notes (Part 1)

Now it's time for a retrospective.

The previous sections felt like testing blades in an armory: swinging KASAN for a few cuts, thrusting with UBSAN, and finally picking up the new sword that is Clang. These scattered attempts give you a sense that "this thing is sharp," but when you hit the real battlefield—facing a mysteriously crashing production kernel—you need to know the range and blind spots of every weapon in your hands.

To save you from fumbling when the real deal arrives, let's take some time to lay out these test results side by side.

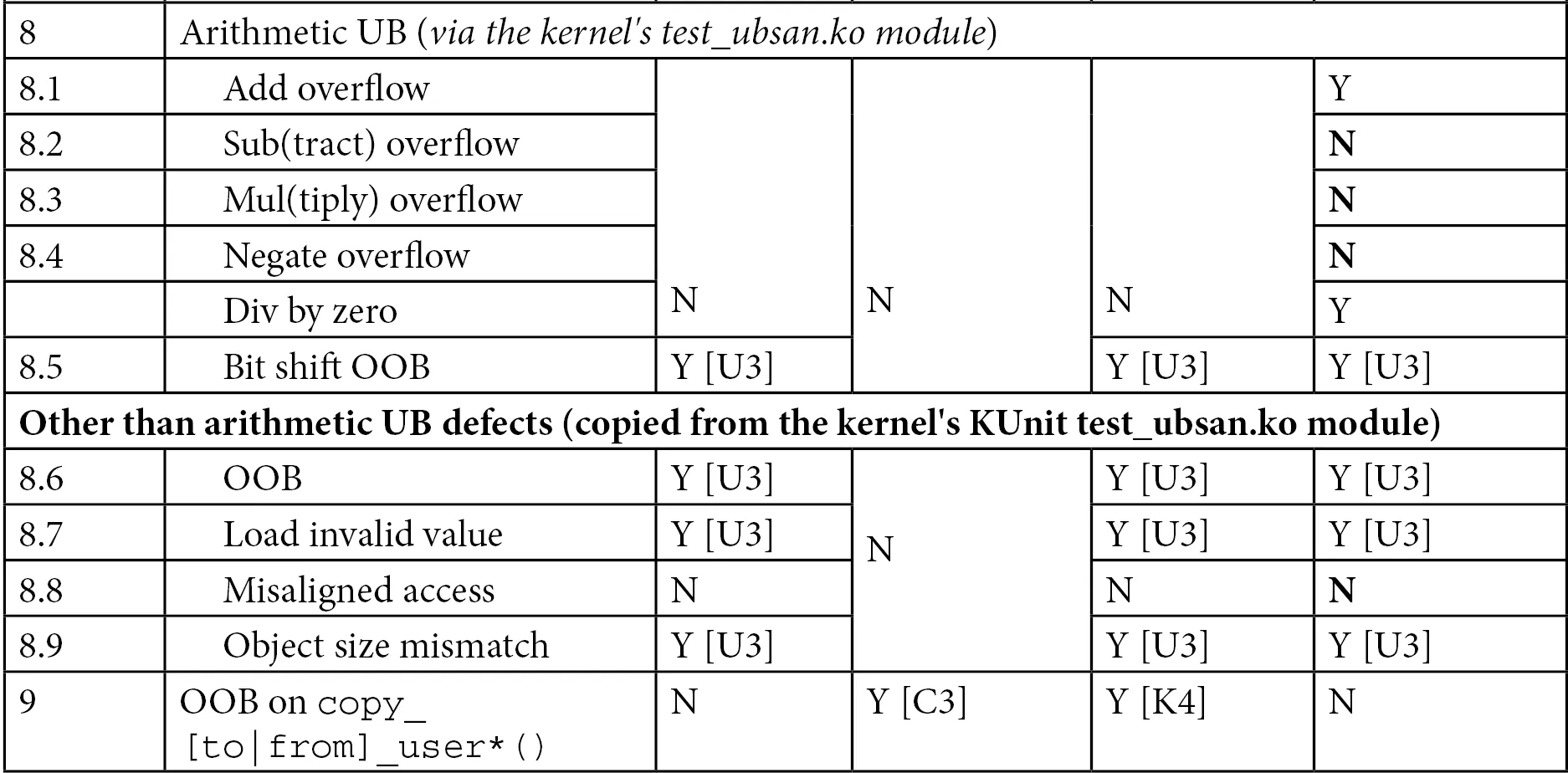

That's exactly why we have the large table below.

Table 5.5 — Summary of common memory defects and the detection capabilities of different technologies

The footnotes in the table (such as [C1], [K1], and [U1]) have been explained in detail in previous chapters. Now, let's look past the dense grid of "Yes" and "No" entries and try to distill a few core patterns:

- KASAN is nearly an "all-rounder": For out-of-bounds (OOB) accesses on global (static) memory, stack memory, and dynamic memory, KASAN catches almost all of them. In contrast, UBSAN falls short when it comes to OOB accesses on dynamic heap memory: just look at test cases 4.x and 5.x in the table, where it is completely blind.

- KASAN has its blind spots: It cannot see pure "undefined behavior" (UB, i.e., the 8.x test cases). But this is exactly UBSAN's home turf, where it can catch the vast majority of such hidden dangers.

- Some bugs slip past everyone: The first three test cases, namely Uninitialized Memory Read (UMR), Use-After-Return (UAR), and memory leaks, leave both KASAN and UBSAN helpless. For UMR, you have to rely on compiler warnings (cranking up -Wall) or static analyzers (like cppcheck) to save the day. As for that tricky UAR bug, we'll save it for Chapter 12, Additional Kernel Debugging Methods, where we'll dedicate a section, Using cppcheck and checkpatch.pl for Static Analysis, to demonstrate how to catch it.

- Memory leaks require a specialist: The kmemleak kernel infrastructure is built specifically for this purpose; it can track memory leaks allocated via k{m|z}alloc(), vmalloc(), or kmem_cache_alloc() (and related interfaces).

A Few Additional Notes on the Table

Looking at this table, a few details are worth pausing to ponder:

- Meaning of [V1]: The table lists this as "Various." This is actually the most terrifying scenario: the system might just throw an Oops, freeze and hang completely, or even appear totally unscathed on the surface. Don't be fooled by appearances. Once there's a bug in the kernel logic, the system is untrustworthy, even if it happens to be running fine right now. This "looks okay" state is often a ticking time bomb.

- Meaning of [V2]: This isn't expanded upon in the table, but I must give a quick heads-up. We'll save the full explanation of this detail for the next chapter in the section, Running SLUB Test Cases on a Kernel with slub_debug Disabled. There's a plot twist there that's worth looking forward to.

A KASAN Alternative — KFENCE (Kernel Electric-Fence)

When it comes to memory detection, you might be thinking to yourself: KASAN is great, but that performance overhead... I really wouldn't dare enable it on a machine running a production environment. Is there a way to enjoy the benefits of detection without worrying about dragging the machine down?

This is where KFENCE (Kernel Electric-Fence) comes in.

This is a very new tool in the Linux kernel (introduced in version 5.12, and at the time of writing, it's considered quite cutting-edge).

KFENCE has a clear positioning: a low-overhead, sampling-based memory safety error detector. It watches for three notorious classes of heap memory errors: use-after-free, invalid free, and out-of-bounds access. At the time of writing, it supports the x86 and ARM64 architectures and hooks into the kernel's SLAB and SLUB allocators.

You might ask: Since we have KASAN, why bother with KFENCE?

That's exactly the right question. Although their functionalities overlap, their design philosophies are completely different:

- Use cases: KASAN's performance penalty is large enough to confine it to development and debugging environments; KFENCE, by contrast, is designed for production environments, with near-zero performance overhead.

- How it works: To catch everything, KASAN must monitor all memory; KFENCE chooses a "sampling" strategy. It doesn't watch all memory, but instead performs random spot-checks. This trades a certain degree of precision for extremely low performance overhead.

- Time versus probability: KASAN "catches all immediately," while KFENCE "will catch you sooner or later." As long as the system runs long enough (or you have enough machines running), KFENCE is almost guaranteed to stumble upon that bug.
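The "sooner or later" argument can be made concrete with a little arithmetic. The sketch below is a userspace model, not kernel code, and rests on a loudly simplified assumption: each time the buggy code path runs, the allocation it touches is guarded with some fixed, independent probability p. KFENCE's real sampling is paced by a time interval rather than a per-allocation coin flip, so p is only a stand-in, and the function names are hypothetical.

```c
#include <assert.h>

/*
 * Probability that at least one of n independent triggers of a bug hits a
 * sampled (guarded) allocation, when each trigger is sampled with
 * probability p. This is 1 - (1 - p)^n.
 */
double detect_probability(double p, unsigned long n)
{
    double miss = 1.0;

    assert(p >= 0.0 && p <= 1.0);
    for (unsigned long i = 0; i < n; i++)
        miss *= 1.0 - p;    /* chance that every trigger so far was missed */
    return 1.0 - miss;
}

/*
 * Smallest number of triggers n for which detect_probability(p, n)
 * reaches at least `target`.
 */
unsigned long triggers_needed(double p, double target)
{
    double miss = 1.0;
    unsigned long n = 0;

    assert(p > 0.0 && p <= 1.0);
    assert(target >= 0.0 && target < 1.0);
    while (1.0 - miss < target) {
        miss *= 1.0 - p;
        n++;
    }
    return n;
}
```

Under this model, a bug whose trigger is sampled only once in a thousand times (p = 0.001) needs roughly 4,600 triggers before the chance of at least one catch reaches 99%. A single test run will rarely get there, but a fleet of long-running production machines will, which is the trade KFENCE makes.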

The conclusion is simple: On development and debugging machines, go straight for KASAN (you can also enable KFENCE alongside it); on production machines, where KASAN's overhead rules it out, KFENCE is the one to reach for.

To enable KFENCE, you need to set CONFIG_KFENCE to y.

(⚠️ Note: Because this technology is so new, the 5.10 kernel series we use in this book doesn't have this option yet. You'll need to upgrade your kernel source slightly to play with it.)

For more details on KFENCE—including how to fine-tune it, how to interpret its error reports, and its internal implementation principles—you can dive into the official documentation: Documentation/dev-tools/kfence.rst.

One Last Addition — CONFIG_FORTIFY_SOURCE

Before wrapping up this chapter, there's one more heavy-hitting weapon to introduce.

If you upgrade your kernel to 5.18 (the latest stable kernel at the time of writing), you'll encounter a new, stricter memcpy() API family (covering memcpy(), memmove(), and memset()). Behind this is a kernel feature called compile-time bounds checking, which internally leverages compiler hardening capabilities.

The kernel configuration option for this feature is CONFIG_FORTIFY_SOURCE.

Once enabled, it helps catch a large class of typical buffer overflow defects at both compile time and runtime. It's like adding an invisible safety net around memory operation functions. If you want to understand just how hardcore this mechanism is, we highly recommend reading this LWN article: Strict memcpy() bounds checking for the kernel.
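To get a feel for what "leveraging compiler hardening capabilities" can look like, here is a minimal userspace sketch. It is not the kernel's implementation, which is considerably more elaborate. It assumes GCC or Clang, whose `__builtin_object_size()` builtin reports the size of the destination object when the compiler can determine it. The names `checked_memcpy`, `checked_memcpy_impl`, `demo_fits`, and `demo_overflows` are hypothetical, made up for this illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/*
 * Hypothetical helper, not a kernel API. The macro captures the size of the
 * destination object at the call site, where the compiler can still see it.
 */
#define checked_memcpy(dst, src, n) \
    checked_memcpy_impl((dst), (src), (n), __builtin_object_size((dst), 0))

/* Returns 0 after copying, or -1 if the copy would overflow dst. */
static inline int checked_memcpy_impl(void *dst, const void *src, size_t n,
                                      size_t dst_size)
{
    /* (size_t)-1 means the compiler could not determine the size of dst */
    if (dst_size != (size_t)-1 && n > dst_size)
        return -1;
    memcpy(dst, src, n);
    return 0;
}

/* Copies 8 bytes into an 8-byte buffer: allowed. */
int demo_fits(void)
{
    char dst[8];

    return checked_memcpy(dst, "1234567", 8);
}

/* Tries to copy 16 bytes into an 8-byte buffer: refused before memcpy(). */
int demo_overflows(void)
{
    static const char src[16] = "0123456789abcde";
    char dst[8];

    return checked_memcpy(dst, src, sizeof(src));
}
```

The design point to notice is the fallback: when the size of the destination is unknown, the sketch simply lets the copy through, so a check like this can only ever catch the overflows the compiler is able to reason about.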

Chapter Echoes

By this point, our journey in this chapter has covered the core paths of kernel memory debugging.

Do you still remember the questions we posed at the beginning of this chapter?

- Why are memory bugs so hard to find?

- Without rebooting the machine or destroying the crime scene, how much can we actually do?

Now you have the answers in your hands.

Although C gives us the power to manipulate everything, the price of that power is that we must manage memory ourselves—and one slip plunges us into the abyss of undefined behavior. In this chapter, we didn't just meet the various enemies you might encounter—from simple out-of-bounds accesses to bizarre undefined behavior—more importantly, we equipped an entire cabinet of weapons to deal with them.

This isn't just a laundry list of KASAN and UBSAN. We've established a layered defense mindset: using KUnit for automated regression, debugfs scripts for manual probing, UBSAN to patch up logic holes, and finally switching to the Clang compiler to leverage its sharper diagnostic capabilities.

That big table (Table 5.5) isn't the finish line; it's merely an intermediate checkpoint—in the next chapter, we'll fill in data for even more tools, continuing to refine this battle map. And tools like KFENCE and FORTIFY_SOURCE show the direction of the future: even under the harsh constraints of a production environment, we still have ways to catch those hidden defects.

Reaching this point, congratulations—you've completed this long and crucial first part: the foundational training for catching kernel-space memory bugs. Don't rush to turn the page; take some time to digest this. When you feel the sword in your hand is familiar enough, the next chapter is waiting for you, where we'll complete the remaining puzzle pieces of catching kernel memory defects.